Walking the Tightrope: Maximizing Information Gathering while Avoiding Detection for Red Teams

Analyze the balance between gaining useful information and avoiding detection, detailing recon techniques that can be employed without compromising stealth.

Rob Joyce, who at the time was Head of the NSA's Tailored Access Operations group, had this great quote from a 2016 USENIX talk:

“We put the time in to know that network. We put the time in to know it better than the people who designed it and the people who are securing it. And that’s the bottom line.”

The concept of truly understanding a network can be applied to the commercial side of testing. In the adversary simulation space, you usually land on endpoints with a list of client objectives. Most adversary attack simulations start from a zero knowledge perspective, and a fast ramp-up is needed. If you’re currently not in this space or have taken classes on red teaming, internal discovery is usually a couple of bullet points or hyper-focused on tools. What’s generally covered is in-depth AD exploration and concepts around specific tools like BloodHound or a single recon script. From my experience, I have found this lacking as there is a longer-form process many red teamers take, which is usually not exciting or easy to lab up. The discovery process includes many more things, like reviewing internal documentation, internal websites, and initial host configuration, to name a few.

Host-based discovery is vital in building a picture and attack plan for a target organization. When one lands, taking the time to focus on the host also provides a way to measure the level of maturity of the organization by looking at the controls in place and how they're configured. A lot can be gleaned during host enumeration—it provides context that can dictate the operational tempo and tools used based on those controls. In most engagements, it is not that important, but in red teaming, it is critical, given that the exercise is conducted against mature organizations. We want as much data as possible so that if complete network eviction occurs, we have the information needed to help regain access.

Many new red teamers come from traditional pentest backgrounds. Penetration testing favors shorter-time box assessments and has testers trained to move more quickly and compromise other systems quickly. The mindset shift is not necessarily easy, and it’s common to see newer testers move on from the recon phase quickly when they first make the red teaming jump. By moving too fast, important information can be missed by the host, and it can also introduce risk by creating patterns of behavior that stand out. Things can also go in the other direction and cause analysis paralysis, with being afraid to do anything for fear of getting caught. This is why understanding modern defensive products and detection engineering is now a building block for offensive testing. Building a strong vetted recon methodology will to help new testers gain confidence to better plan and execute testing as well as open up opportunities for them to contribute new and novel points of view.

Planning

We want to constantly explore and improve low-risk, low-noise recon methods and improve tools and techniques to gather valuable information without triggering alerts. We use this approach to inform and build a methodology and playbook that leverage as much directly accessible information as possible to build better attack paths. The goal is to stay within normal user behavior for as long as possible. We are avoiding unnecessary Windows API calls, network traffic, and accessing systems outside the typical bounds of the user. Ultimately, we will increase our level of risk after we complete a round of host-based recon and normal user network behavior.

Steps

The first step in building a methodology is picking a centralized place and a sharable format, for example, Obsidian, which allows knowledge transfer across the team and a central place to pull ideas from when building internal tooling and standard workflows. Obsidian can be substituted for whatever works best for you or your team and ultimately leaves the reader with a choice. Adoption of a standard is the crucial part though, not the underlying tool.

A good second step is finding ways to feed the living methodology such team debriefs. After every engagement, have a team meeting for a fixed length of time to document and review what worked and what didn’t, and feed ideas back into this. If you’re solo or just looking for new inspiration, Twitter, blogs, and other CTI sources can also help evolve and inspire your reconnaissance process.

The third area to focus on is understanding the detection space and to keep that in mind while risk-ranking reconnaissance and future activities. A great place to start is published rule sets such as Sigma, Elastic, and all of the various published resources around Microsoft Sentinel. Knowing what the majority of defenders are looking for will help direct where to stay away from or if that area is worth the extra time and effort into evasion research.

The last area would be building a lab environment to test and validate your offensive hypothesis and integrations you made to your toolsets from the newly built methodology. There are lots of options in this space and several blogs and YouTube videos on the subject. The key components you want include are some sort of SIEM and the ability to capture events via Sysmon or, better yet, access to EDR products you will encounter during testing.

By the end of your planning process and steps to be taken, you should have picked a platform as a shared medium in addition to a couple of internal processes to start both the build out and a plan to validate.

On-Host Recon

Assumptions going into this are that we have an implant running a low-level user and reliable command and control(C2) with the ability to offload files. We can achieve this by leveraging the cloud providers/CDNs/categorized domains or utilizing multiple C2 channels—one for C&C and one for exfil. An additional assumption is that the target system is running an EDR product and ingesting and acting on alerts typical of most organizations having red team engagements performed.

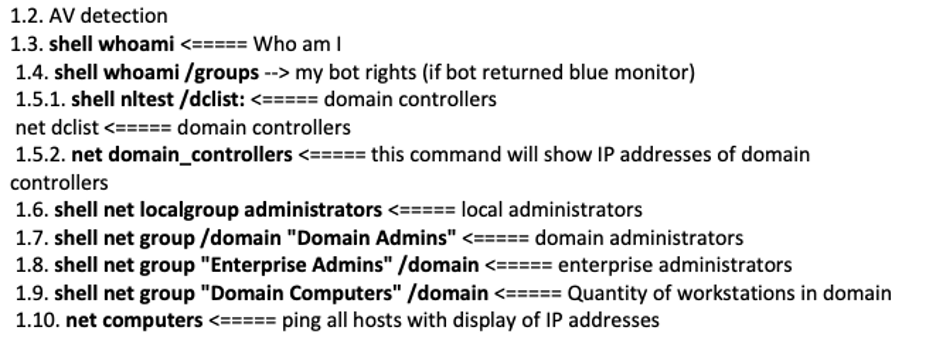

Consider the following example commands from the Conti playbook:

Why would we want to avoid things like the above?

This behavior is known and commonly has detections written for it or a series of commands in a short period. The MITRE evaluations did an excellent job of highlighting host reconnaissance commands used by threat actors in the wild and the EDR's ability to trigger an alert on them. I chose three of the EDR products we most encountered the last year at TrustedSec and included the MITRE evaluation links to the detections, which include screenshots of alerts firing on this behavior.

The above is a specific case of cmd.exe calling net.exe. However, many detections are written from process creation events combined with command line arguments and the parent process. We can avoid calling these binaries directly and live inside the initial implant process utilizing Beacon Object Files (BOFs) or frameworks that use standard Windows APIs.

BOFs were first implemented inside Cobalt Strike, but multiple frameworks have adopted the ability to execute them. The power of BOFs for reconnaissance is removing cross-process injection, running other Windows binaries from cmd.exe, or dealing with PowerShell. TrustedSec has several deep dive blogs on this topic from basics to development:

A DEVELOPER'S INTRODUCTION TO BEACON OBJECT FILES

https://www.trustedsec.com/blog/a-developers-introduction-to-beacon-object-files/

SITUATIONAL AWARENESS BOFS FOR SCRIPT KIDDIES

https://www.trustedsec.com/blog/situational-awareness-bofs-for-script-kiddies/

BOFS FOR SCRIPT KIDDIES

https://www.trustedsec.com/blog/bofs-for-script-kiddies/

CHANGES IN THE BEACON OBJECT FILE LANDSCAPE

https://www.trustedsec.com/blog/changes-in-the-beacon-object-file-landscape/

COFFLOADER: BUILDING YOUR OWN IN MEMORY LOADER OR HOW TO RUN BOFS

https://www.trustedsec.com/blog/coffloader-building-your-own-in-memory-loader-or-how-to-run-bofs/

Playbook

Let’s start building out an initial playbook for information we want to gather. This is in no way complete but will allow us to start building a repeatable workflow and supporting tooling.

Host Based Configuration

- Is it domain joined, hybrid, cloud joined, or standalone?

- Is auditing configured?

- Are there logs shipped off system?

- Who uses the system, and where do they fit into the organization?

- How stable is the system?

- Does the system crash, and if so, how often?

- Is the system suspended or shutdown at the end of the workday?

- History of profiles of users that use the system

- Connections and connection history

- Tokens and credentials under the context under which we are running

- Proxy configuration

- User behavior analytics

Installed Software

- What applications does this user live in?

- Outlook?

- Browser of choice version

- For recon and user-agent mirror for C2

- Credentials and cookies

- Thick clients?

- Electron apps vulnerable to token stealing

- Can we tell how this system is managed from installed software?

- EDR/AV Products

- Exclusions

- Configuration level when possible

- Identifying EDR products in a more passive manner, you can pass a directory listing of C:\Windows\System32\Drivers into https://gist.github.com/HackingLZ/b7e5ef65524bb986c16882ef534715c4.

- This will look up the drivers based on name and match the corresponding altitude number range https://learn.microsoft.com/en-us/windows-hardware/drivers/ifs/allocated-altitudes. For example, AV products are within the range 320000 - 329998.

- Application Control

- Built-in capabilities - third party or both

- Driver Enumeration

- Altitude numbers/defensive products

- Outdated, hardware-specific IE Dell

- Vulnerable drivers that can be abused

- VPN Client

- Common Priv Esc path

- Is it connected? Many enumeration tasks may depend on whether there is a connection to the corporate network.

- Password Storage - LastPass/KeePass

- Office Version

- Security features and defaults vary from version to version.

- Printers - Large printers are a great source of LDAP or pivoting.

Common Folders

- Desktop

- Downloads

- Temp

- Trusted Locations - https://learn.microsoft.com/en-us/deployoffice/security/trusted-locations

- C:\ - Organizations often have standard build/tool folders here.

Honey Documents

There are a few defensive products that plant document files which trigger an alert on access and are often marked as such to stop automated ransomware activity. These are usually easy to spot. Alternately, there is a rise in documents that contain a canary token which trigger upon opening of the document. We are able to identify these documents with methods such as this example PoC: https://gist.github.com/HackingLZ/8fed5fa4983b63b773380e1a8e82478a

Ideally, any documents you take offline are opened in an isolated VM; however, identifying the presence of honey documents can increase your caution level when it comes to accounts and other areas.

Chat logs

- Slack

- Teams

Video Conferencing Software

- Webex

- Zoom

Email - C:\Users\UserName\AppData\Local\Microsoft\Outlook

- Help Desk/IT emails

- Signatures

- Emails with files attached

- Upload/Download portals

- Emails to template for phishing pretexts

- GAL

- MFA products

Browser

- Bookmarks

- Internal resources

- Employee lookup tools

- SharePoint/Wikis

- Secure Secrets/Password Storage

- Network documentation

- IP documentation

- IT Support/Help Desk

- Plugins

- External resources (future pretexts)

- 401(k)

- Payroll

- eLearning

Stored Credentials

- Password reuse is obvious

- Password patterns

- Overlap with domain password

Job Role Specific Tools

- WSL

- Python

- Visual Studio

- AWS CLI tools

- Putty

- Logs/Keys

Other Areas of Interest

- DNS cache

- PowerShell history

- APPDATA\Microsoft\Windows\PowerShell\PSReadLine\ConsoleHost_history.txt

The last part of on-host reconnaissance and something to always consider is cohabitation. The topic of overlapping events while testing is not discussed often, but it does happen. There is always a chance a threat actor could compromise a system before any testing activities, or potentially, a previous vendor could leave behind artifacts from a penetration test or red teaming. It is often more common on Internet-facing systems like legacy web servers. If you’re going down the path of building some of the host-based reconnaissance into your toolsets, then including checks for standard persistence methods, odd processes, and other IOCs could go a long way in helping clients identify an event that otherwise went unnoticed. Adding features to recognize cohabitation doesn’t reduce the need for proactive threat hunting and other blue team activities, however.

Off-Host Normal Network Behavior

This is great opportunity to make use of SOCKS features in implants. If we don’t have the users' passwords, there are BOFs and other methods to prompt the user for their password, which is often effective and goes unreported or detected.

Cloud File Storage

- OneDrive, Box, Dropbox, SharePoint, Azure Storage Accounts, AWS S3 Buckets

- Potential mirror vendor for large file exfiltration

Review of bookmarks from previous phase

Internal Employee Lookup Tools

- Common in large organizations where internal employee lookup tools are built and are often backed by LDAP or other internal technologies and very rarely, if ever, are monitored when you compare it to something like AD directly.

File Shares related to job role

NETLOGON/SYSVOL

- Netlogon scripts for some reason keep giving

- Mapped drives

- Stored scripts

Azure Enumeration

- Graph API

- Look for cookies and try to extract the PRT (Primary Refresh Token).

- Bearer token extraction and reuse

Other Cloud Platforms

- Depending on the type of user and applications, we can look for authentication tokens, keys, and passwords.

- What services are being accessed?

ServiceNow

Centralized Knowledge Bases

- SharePoint

- Confluence

- Wikis

Help Desk Tickets/Systems

- Can we find support tickets that may contain sensitive information by browsing the system under our current context?

- Can we find ticket information in emails?

GitLab/SVN

- Be aware, mass cloning browsing usually goes unnoticed, but users often have limited access.

Revisit Chat platforms to search for credentials across channels.

Fin

The goal here wasn’t to build an inclusive list of every possible option but to help start building a post-exploitation methodology for gathering decision-making data.

At a minimum, we understand what defensive controls are on a workstation and the installed programs, versions, and other information with which we can start making informed decisions. Hopefully by the time you're done with situational awareness, you can answer questions such as:

- What is the risk vs. reward of elevating privileges on the current system?

- Are we better off moving on from this machine or switching it to a long-term C2 host?

- How are the systems managed?

- What did I learn from bookmarks?

- Are there any emails I can use for follow-on pretexts? What file types are included with emails or links?

Attack paths are often highlighted in the MITRE IDs an attacker used, but the decisions about why they avoided one for the other are less discussed and will be topics of future blogs. Even behavior that could be written off as basic might have been done for a particular reason, like being evasive.

Lastly, a huge thanks to Carlos Perez, Jason Lang, Edwin David, and Paul Burkeland who contributed comments, suggestions, and methodology ideas to this post.